vSphere Supervisor Services

Supervisor Services

Explore VCF services purpose-built to accelerate modern application delivery and elevate private cloud consumption, with new capabilities continuously added to expand your service portfolio.


Install Supervisor Services

📢 Download links for Service artifacts have moved to support.broadcom.com

Please refer to How to find and install Supervisor Services to find and install supervisor services.

Starting with VCF 9.1.0, you can find which service versions are compatible with which Supervisor versions in the Interoperability Matrix.

Prior to vSphere 8 Update 1, Supervisor Services were only available with Supervisor Clusters enabled using VMware NSX-T. With vSphere 8 U1, Supervisor Services are also supported when using the vSphere Distributed Switch (VDS) networking stack.

| Supervisor Service | vSphere 7 | vSphere 8 | VCF 9 |
|---|---|---|---|
| vSphere Kubernetes Service | ❌ * | ✅ requires vSphere 8.0 Update 3 or later | ✅ |
| Local Consumption Interface | ❌ | ✅ requires vSphere 8.0 Update 3 or later | ✅ |
| vSAN Data Persistence Platform Services - MinIO | ✅ | ✅ | ✅ |
| Backup & Recovery Service - Velero | ✅ | ✅ | ✅ |
| CA Cluster Issuer | ❌ | ✅ | ✅ |
| Cloud Native Registry Service - Harbor | ❌ * | ✅ | ✅ |
| Kubernetes Ingress Controller Service - Contour | ❌ | ✅ | ✅ |
| External DNS Service - ExternalDNS | ❌ | ✅ | ✅ |
| NSX Management Proxy | ❌ | ✅ requires vSphere 8.0 Update 3 or later with Supervisor Clusters enabled using VMware NSX-T | ✅ Use Supervisor Management Proxy instead |
| Supervisor Management Proxy | ❌ | ❌ | ✅ |
| Data Services Manager Consumption Operator | ❌ | ✅ requires vSphere 8.0 Update 3 or later with additional configuration. Please contact Global Support Services (GSS) for the additional configuration | ✅ |
| Secret Service | ❌ | ❌ | ✅ |
| ArgoCD Service | ❌ | ✅ requires vSphere 8.0 Update 3g or later with a VCF entitlement | ✅ requires 'vSphere Supervisor 9.0.0.0100'/'VCF 9.0.1.0' or later |
| SRE Supervisor Role Service | ❌ | ✅ requires vSphere 8.0 Update 3g or later | ✅ |

* The embedded Harbor Registry and vSphere Kubernetes Service features are still available and supported on vSphere 7 and onwards.

How to find and install Supervisor Services

- Log in to support.broadcom.com

- Select `Software` in the left-hand navigation, click `Enterprise Software`, then click `My Downloads`.

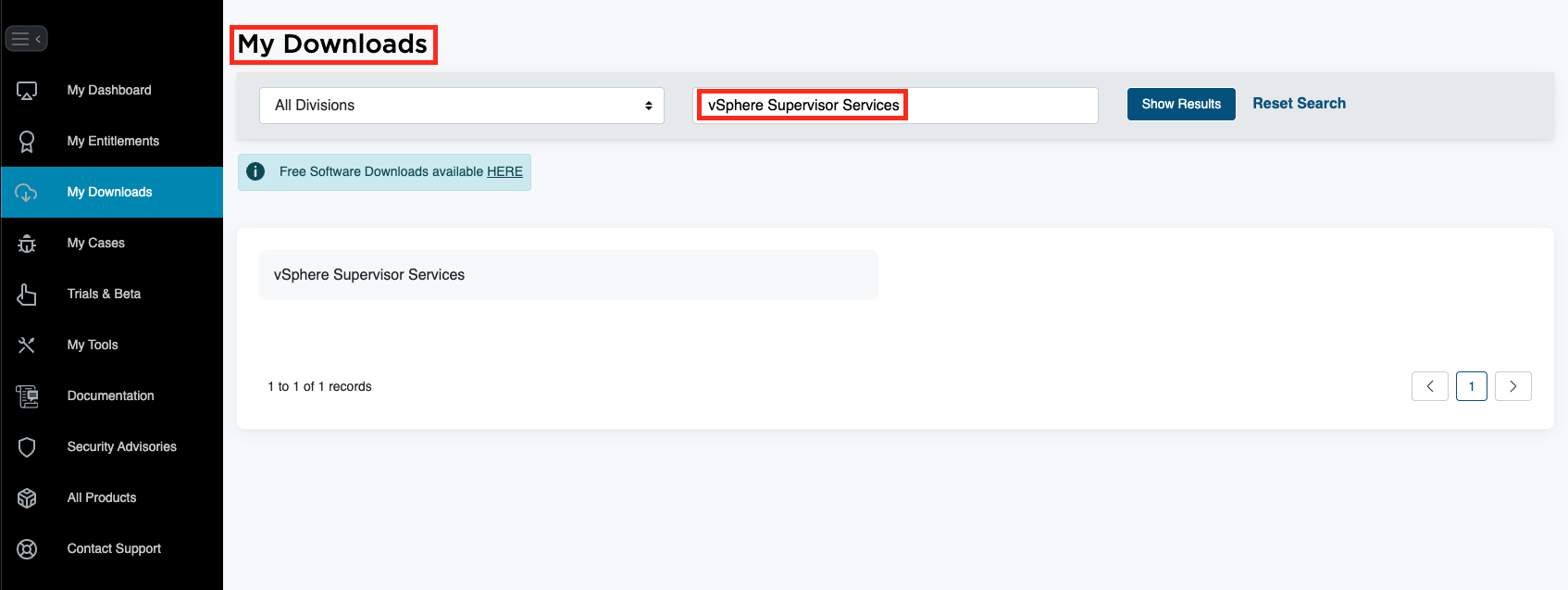

- All services are entitled under VCF or legacy SKUs and can be downloaded directly from `My Downloads`. Search for `vSphere Supervisor Services` under `My Downloads`.

- If you are looking to download VMware Private AI Services, go to `My Downloads` and then search for `VMware Private AI Services`.

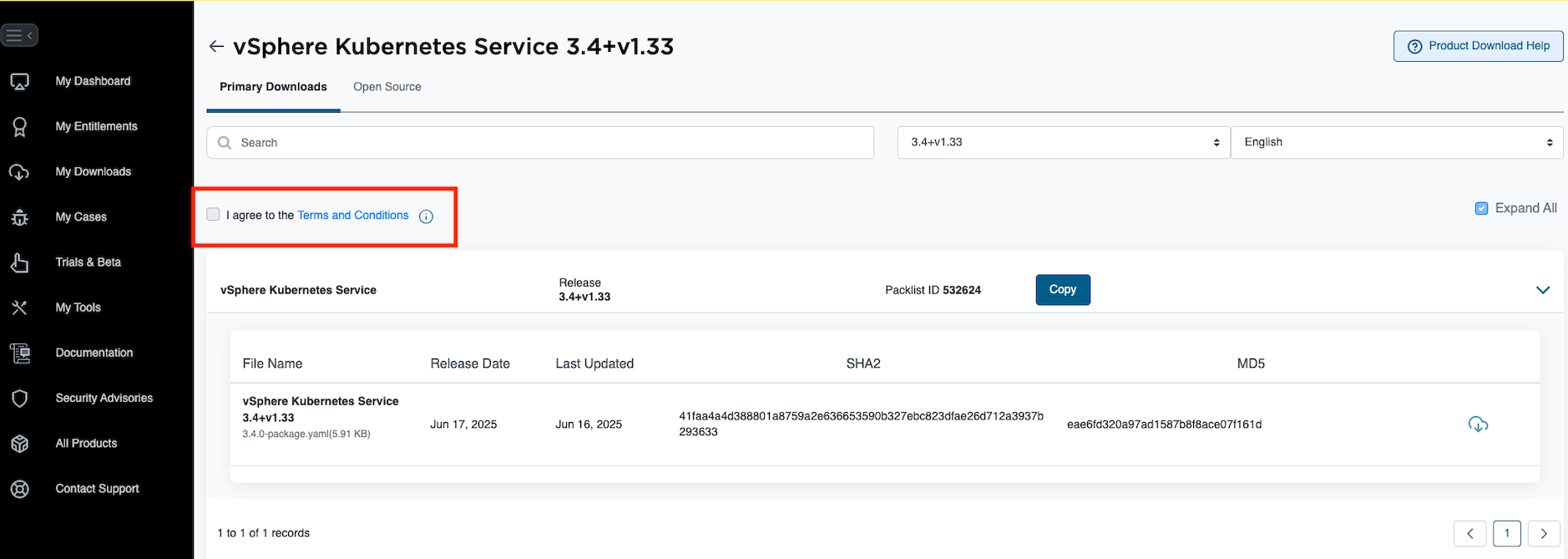

- Next, navigate to the service and version you want to install.

  Check the Interoperability Matrix to find out which service version is compatible with which Supervisor version.

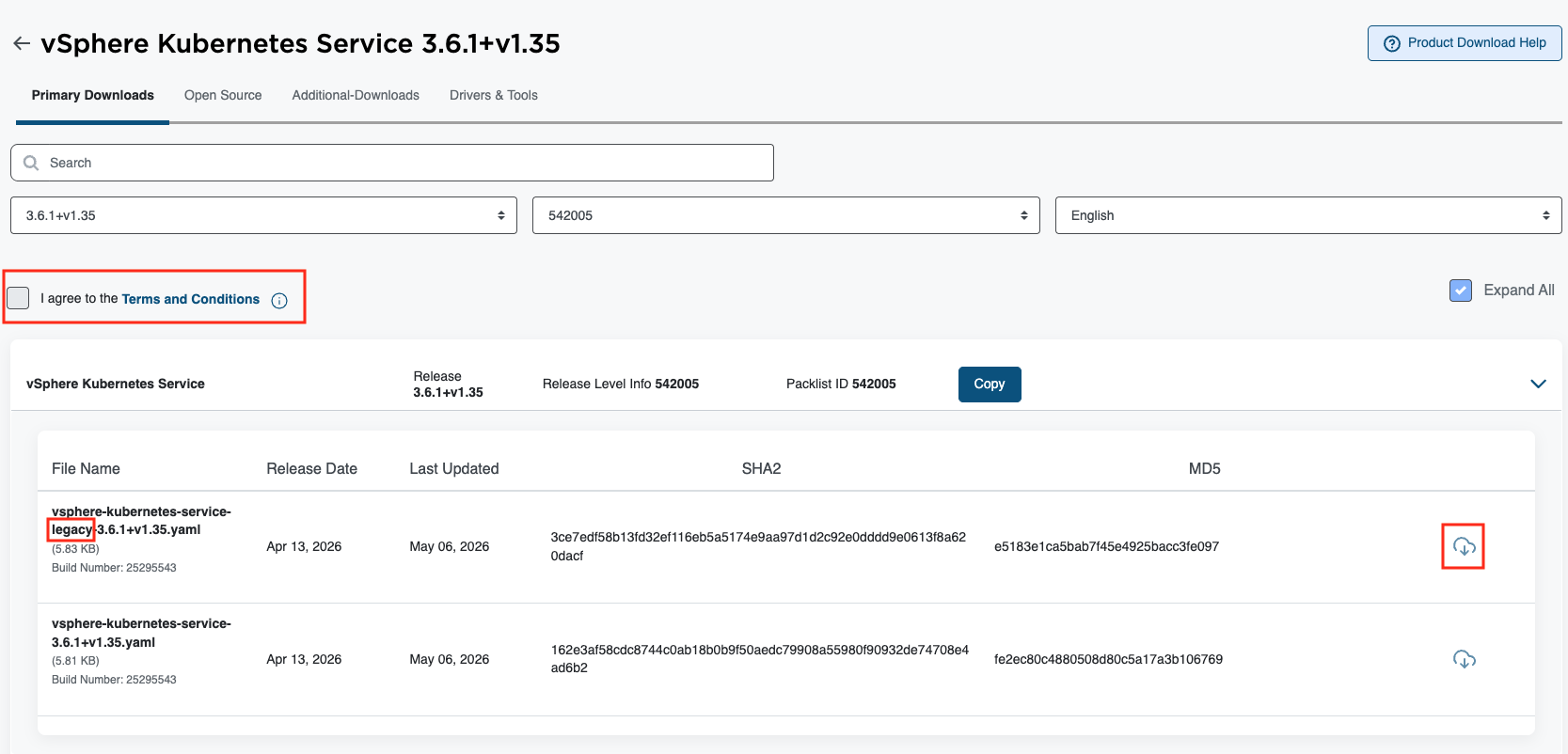

- To download files, first click `Terms and Conditions` to activate the checkbox.

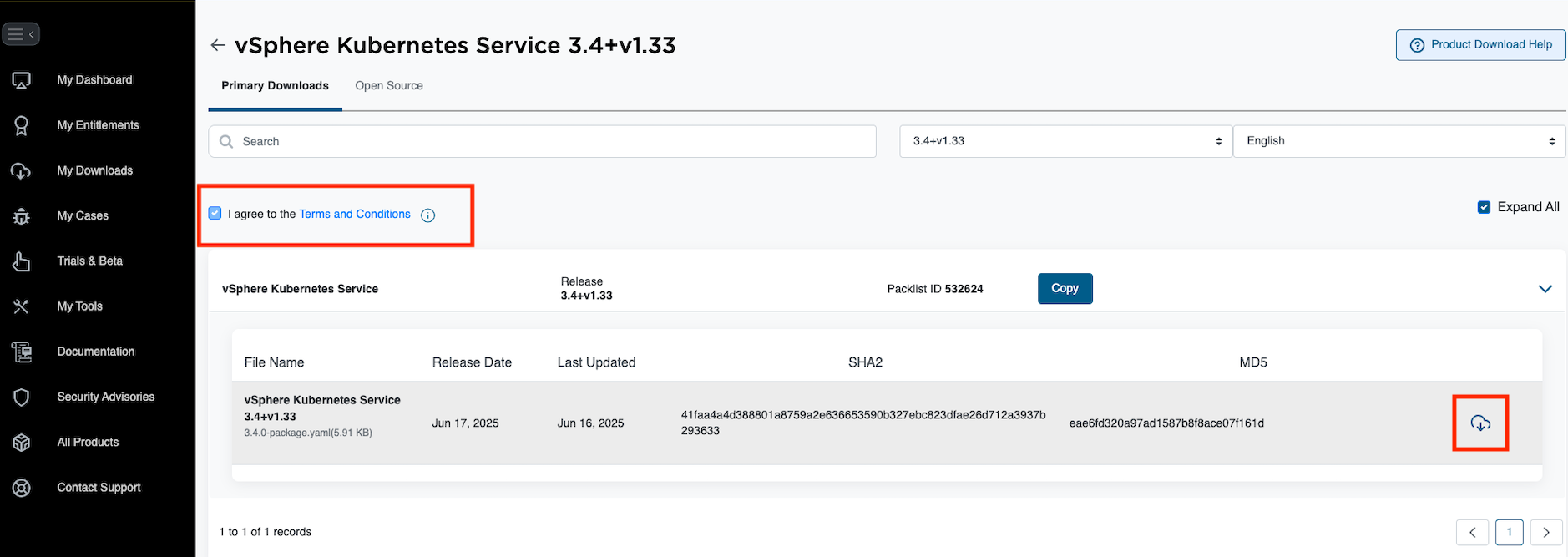

- Go through the `Terms and Conditions`. Once you agree, select the checkbox to activate the download icon.

- Click the download icon for the service definition and for any additional files (such as `values.yaml` files).

⚠️ Services released with VCF 9.1.0 or later may include two YAML definitions: a 'legacy' version (the file name contains the word `legacy`) for pre-9.1.0 VCF deployments or air-gapped VCF environments, and a standard version for VCF 9.1.0+ deployments where the VCF Software Depot is supported.

⚠️ Service configuration value fields may change with each version. If a `values.yaml` manifest is available under the selected service version, consider downloading it, editing it if needed, and providing it when installing or upgrading to that service version.

- You can now proceed to install your service.
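The choice between the 'legacy' and standard YAML definitions described in the note above can be sketched as a small helper. This is only an illustration: the function names and the `air_gapped` flag are assumptions made for this example (the flag stands in for "the VCF Software Depot is not available"), and the version parsing assumes plain dotted numeric versions such as `9.1.0`.

```python
def parse_version(text: str) -> tuple:
    """Turn '9.1.0' into (9, 1, 0), padding short versions with zeros."""
    parts = [int(piece) for piece in text.split(".")]
    parts += [0] * (3 - len(parts))
    return tuple(parts)


def pick_definition(vcf_version: str, air_gapped: bool) -> str:
    """Return 'legacy' or 'standard' for a given VCF deployment (hypothetical helper)."""
    # Air-gapped environments use the legacy file regardless of version.
    if air_gapped:
        return "legacy"
    # Deployments older than VCF 9.1.0 also use the legacy file.
    if parse_version(vcf_version) < (9, 1, 0):
        return "legacy"
    return "standard"
```

For example, `pick_definition("9.0.1.0", air_gapped=False)` selects the legacy definition, while a connected 9.1.0 deployment selects the standard one.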

Supervisor Services Catalog

- vSphere Kubernetes Service (VKS)

- Local Consumption Interface

- vSAN Data Persistence Platform (vDPP) Services

- Backup & Recovery Service

- CA Cluster Issuer Service

- Cloud Native Registry Service

- Kubernetes Ingress Controller Service

- External DNS Service

- Supervisor Management Proxy

- NSX Management Proxy

- Data Services Manager Consumption Operator

- Secret Store Service

- ArgoCD Service

- SRE Supervisor Role

vSphere Kubernetes Service

Please refer to How to find and install Supervisor Services to find and install supervisor services.

VMware vSphere Kubernetes Service (VKS, formerly known as the VMware Tanzu Kubernetes Grid Service or TKG Service) lets you deploy Kubernetes workload clusters on the vSphere Supervisor (formerly known as the vSphere IaaS control plane). Starting with vSphere 8.0 Update 3, VKS is installed as a Supervisor Service. This architectural change decouples VKS from Supervisor releases and lets you upgrade VKS independently of vCenter Server and Supervisor.

- Service install documentation

- Latest API reference

vSphere Kubernetes Service Versions

The Interoperability Matrix shows each VKS version below, including compatible Kubernetes releases and the vCenter Server versions containing compatible Supervisor versions. Please refer to VKS release notes documentation for newer VKS releases. Note that some compatible Kubernetes releases may have reached End of Service; refer to the Product Lifecycle tool (Division: "VMware Cloud Foundation", Product Name: "vSphere Kubernetes releases") to view End of Service dates for Kubernetes releases.

- VKS v3.4.0

- VKS v3.3.3

- VKS v3.3.2

- VKS v3.3.1

- VKS v3.3.0

- VKS v3.2.0

- VKS v3.1.1

- VKS v3.1.0

Local Consumption Interface

Please refer to How to find and install Supervisor Services to find and install supervisor services.

Provides the Local Consumption Interface (LCI) for Namespaces within vSphere Client.

The minimum required version for using this interface is vSphere 8 Update 3.

**IMPORTANT NOTICE**: You must uninstall version 1.0.x before using the 9.0.0 version of LCI. Failure to do so will result in the interface not starting correctly when looking at the Resources tab for a namespace.

Local Consumption Interface Versions

Instructions for installing the Supervisor Service can be found in the VCF documentation.

- Version:

- 9.1.0

- 9.0.2 Release notes

- 9.0.1 Release notes

- 9.0.0 Release notes

- 1.0.2 Release notes

OSS information

OSS information is available on the Broadcom Customer Portal.

vSAN Data Persistence Platform (vDPP) Services

Please refer to How to find and install Supervisor Services to find and install supervisor services.

vSphere Supervisor offers the vSAN Data Persistence platform. The platform provides a framework that enables third parties to integrate their cloud native service applications with underlying vSphere infrastructure, so that third-party software can run on vSphere Supervisor optimally.

- Using vSAN Data Persistence Platform (vDPP) with vSphere Supervisor documentation

- Enable Stateful Services in vSphere Supervisor documentation

Available vDPP Services

- MinIO partner documentation

- Version:

- 2.0.10


Backup & Recovery Service

Please refer to How to find and install Supervisor Services to find and install supervisor services.

Velero vSphere Operator helps users install Velero and its vSphere plugin on a vSphere with Kubernetes Supervisor cluster. Velero is an open source tool to safely back up and restore, perform disaster recovery, and migrate Kubernetes cluster resources and persistent volumes.

- Service install documentation

Velero vSphere Operator CLI Versions

This is a prerequisite for a cluster admin install.

- Velero vSphere Operator CLI versions:

- v1.8.1

- v1.8.0

- v1.6.1

- v1.6.0

- v1.5.0

- v1.4.0

- v1.3.0

- v1.2.0

- v1.1.0

Velero Versions

- Velero vSphere Operator versions:

- v1.8.1

- v1.8.0

- v1.6.1

- v1.6.0

- v1.5.0

- v1.4.0

- v1.3.0

- v1.2.0

- v1.1.0

CA Cluster Issuer Service

Please refer to How to find and install Supervisor Services to find and install supervisor services.

ClusterIssuers are Kubernetes resources that represent certificate authorities (CAs) that are able to generate signed certificates by honoring certificate signing requests. All cert-manager certificates require a referenced issuer that is in a ready condition to attempt to honor the request.

- Service install - Follow steps 1 - 5 in the documentation then continue to the bullet point below.

- Read Service Configuration to understand how to install your root CA into the ca-clusterissuer.

CA Cluster Issuer Versions

- v0.0.2

- v0.0.1

CA Cluster Issuer Sample values.yaml

- We do not provide any default values for this package. Instead, we encourage you to generate your own certificates. Please read How-To Deploy a self-signed CA Issuer and Request a Certificate for information on how to create a self-signed certificate.

Cloud Native Registry Service

Please refer to How to find and install Supervisor Services to find and install supervisor services.

Harbor is an open source trusted cloud native registry project that stores, signs, and scans content. Harbor extends the open source Docker Distribution by adding the functionalities usually required by users such as security, identity and management. Having a registry closer to the build and run environment can improve the image transfer efficiency. Harbor supports replication of images between registries, and also offers advanced security features such as user management, access control and activity auditing.

- Since v2.11.2, Harbor supervisor service supports exposing the registry using a load balancer. Contour is optional, depending on the "enableNginxLoadBalancer" and "enableContourHttpProxy" settings.

- Follow the instructions under Installing and Configuring Harbor on a Supervisor.

Harbor Versions

- v2.14.3

- v2.14.2

- v2.13.1

- v2.12.4

Harbor Sample values.yaml

Sample values can be downloaded from the same location as Service yamls.

- Version: values for v2.14.3 - For details about each of the required properties, see the configuration details page.

- Version: values for v2.14.2 - For details about each of the required properties, see the configuration details page.

- Version: values for v2.13.1 - For details about each of the required properties, see the configuration details page.

- Version: values for v2.12.4 - For details about each of the required properties, see the configuration details page.

Kubernetes Ingress Controller Service

Please refer to How to find and install Supervisor Services to find and install supervisor services.

Contour is an Ingress controller for Kubernetes that works by deploying the Envoy proxy as a reverse proxy and load balancer. Contour supports dynamic configuration updates out of the box while maintaining a lightweight profile.

- Service install - Follow steps 1 - 5 in the documentation.

Contour Versions

- v1.33.1

- v1.32.0

- v1.31.1

- v1.30.3

- v1.29.3

- v1.28.2

Contour Sample values.yaml

- Sample values can be downloaded from the same location as service yaml. These values can be used as-is and require no configuration changes.

External DNS Service

Please refer to How to find and install Supervisor Services to find and install supervisor services.

ExternalDNS publishes DNS records for applications to DNS servers, using a declarative, Kubernetes-native interface. This operator connects to your DNS server (not included here). For a list of supported DNS providers and their corresponding configuration settings, see the upstream external-dns project.

- On Supervisors where Harbor is deployed with Contour, ExternalDNS may be used to publish a DNS hostname for the Harbor service.

ExternalDNS Versions

- v0.18.0

- v0.16.1+vmware.2

- v0.14.2

ExternalDNS data values.yaml

- Because of the large list of supported DNS providers, we do not supply complete sample configuration values here. If you're deploying ExternalDNS with Harbor and Contour, make sure to include `source=contour-httpproxy` in the configuration values. An incomplete example of the service configuration is included below. Make sure to set up API access to your DNS server and include authentication details with the service configuration.

- RFC2136 TLS connection configuration is available from release 0.16.1. For detailed parameters, refer to external-dns/README-0.16.1.md.

- CPU and memory resources for the RFC2136 deployment container are configurable from release 0.18.0. For detailed parameters, refer to external-dns/README-0.18.0.md.

```yaml
---
#! use tlsConfig to enable TLS connection with BIND server
#! and require BIND server enables TLS meanwhile
#! if want to keep using nonTLS connection, comment or remove the tlsConfig part
tlsConfig:
  tls_enable: true
  #! User requires to provide base64 encryption format of root ca.crt value
  #! which uses to validate tls connection with DNS server once tls_enable=true
  ca_crt: "LS0tLS1CRUdJTiBDRVJUSUZJQ0FURS0tLS0tCk1JSUZwekNDQTQrZ0F3SUJBZ0lVZmxLT3I0UkhickVzMG1ucTZKaTE4Y3FqRUQwd0RRWUpLb1pJaHZjTkFRRUwKQlFBd1l6RUxNQWtHQTFVRUJoTUNWVk14RGpBTUJnTlZCQWdNQlZOMFlYUmxNUTB3Q3dZRFZRUUhEQVJEYVhSNQpNUlV3RXdZRFZRUUtEQXhQY21kaGJtbDZZWFJwYjI0eERUQUxCZ05WQkFzTUJGVnVhWFF4RHpBTkJnTlZCQU1NCkJsSnZiM1JEUVRBZUZ3MHlOVEEwTWpnd056UTBNRFZhRncwek5UQTBNall3TnpRME1EVmFNR014Q3pBSkJnTlYKQkFZVEFsVlRNUTR3REFZRFZRUUlEQVZUZEdGMFpURU5NQXNHQTFVRUJ3d0VRMmwwZVRFVk1CTUdBMVVFQ2d3TQpUM0puWVc1cGVtRjBhVzl1TVEwd0N3WURWUVFMREFSVmJtbDBNUTh3RFFZRFZRUUREQVpTYjI5MFEwRXdnZ0lpCk1BMEdDU3FHU0liM0RRRUJBUVVBQTRJQ0R3QXdnZ0lLQW9JQ0FRQzEvY3JmTTF1SGNqdy9HWWpJL3BRcmtTblEKRWZuRTN2byt3R1RTNkxUM0JvdmliTzR6UUpTM2E3STZoaytvdzNYMHlxVklwZUVyVFNtT08wR0xlMUZFTFdHbgpZZHM4UldwZ3dvZUhUN1NlSUVGSnFGMko2bFFKRmFXem5zUlhGdk4rbXlpWk41T2o1Q0w4akViSmo0Wkx3Q0ZOClZQV1dWTDZXSzdpaW9WK2tKRXdFMWp5SHVYbXZVQVlCVk9NZlZsa0ptb01mcy9ZL2RKbXJBb1Z6VlVsc1JzRGEKVTAweUhmdHE2cm83YXFMVXJKaTdjM1FqU3BGTTVTVkVVY0UwUjdQVFhMOGFXcXV5RVI3bWUySjBlMEgvMk5jMwpJVm5ONlNiZURwMS9PdTA3eGxncUZ1UXEzTjNwcXl1VEpSWW9XT0dHd1I2Q3J4L0pkUW4zbU90SFVDMEtwZTJwCi92eTNkVnFHN3ErZ3JwN0pBUFZ2Vkh4dmdYYkJka0RMYmxaMjZQOElxMUp6TTI5ZExRdjFqUnVMNFRmSG5xS0EKZmNKeWU5eWJmeWxkd0dGR2M1VXFPaHB1L1ZOak5lVDNtNHlTSnhCVmZ1Q1phZTBDaWZOMWZ1Nk1TdzJWRU4xbgpOWjdpODBpdTE0ZFAwenFKUnRoSHB6SisxYUx5WGpoSWN3YkZHRkNrODNtSHFadjYzT0owbllKY3ZIL2EvSDRtCm5Gdlcrcmh3TGx6R1JLOWMrWS8rOENNMC9FS3VVd21saWNhZWZwTVE1c1BEak5aVk5TeTMycFJlSmt6TTNvbWsKN21EK1hnT2JpbDZXMnNPb09lYkVzSm15Z0x1RlFJZ0s2NTJHbVoyNkJjc25rWmlKVXJYVFo1N2E5MnZvTlhvQwplUEtKSDZqOWxsTi8wQllrYVFJREFRQUJvMU13VVRBZEJnTlZIUTRFRmdRVXBtdnBBRHVWK0NVYjZ2eUNZeng5CjVLQlQ2ekl3SHdZRFZSMGpCQmd3Rm9BVXBtdnBBRHVWK0NVYjZ2eUNZeng5NUtCVDZ6SXdEd1lEVlIwVEFRSC8KQkFVd0F3RUIvekFOQmdrcWhraUc5dzBCQVFzRkFBT0NBZ0VBSThYTk8yQ0xvNWRDc1V6MEZEVTlZUkduT2Z1NApxWGVWOEg4K1czc290L2hBNk5BdGtvcUZqUUpWVmt5QVI4R3NtdnkxUFRRQlVxRTAvbE1DeWdNbGxwMVlwZnhNCmhwSUNWalp4bVZwQjBiTmpMMnJ5WWlrTGdjQVEzYTd5aWphTUFvaUlyREdxYVNodVVoMFFOWmVLUWxMdEJ3OTMKdFR3dGlQMjRFU0s3a05TOXR3blgwYWU0UTJLK09rdzZVQkVyd1h0TnZrKzBGUDAxcVJ4MXFsQ01CeCtqTHFiTgpwZ21PYjFxNFFQSGt4NzEvai9Gdm8xTUdRZm5McEJXakdaMmIvMW5FWEVvMHhVL3llYnpTYTBZT3Y3ZGptcjNqCkp5bHg2RkQySEpCS1ZsT0tBek1rNmlyaUpVeExDUnNSZlI1NEU3NG1TY0NVVXp1OS9UTTYwN2NFNmw4ZVB1VEEKL2FOMFlLeDV3ZEJkSkVadkxyUzVORTZpUjJ6WnVHamJTZFRWLzJFb3Zlc0pPQ1FkUGhYTm12T3lWK280TTRieQpKZ0U2WWxKWldnekVHa0Y2TWQvN3RmaG1BMDMxUUc4NnZqdVJVMWhWSC8vRmF2K3lac0NBRnBBYlNua004czdUCjNQQkxDOWFOSkFOOVVySTRnWGg2aWJFUGRMTnYwdE1hR0JwWDBVMFdub1R0WnlaMGJhVVlSUW90Ly94MGgvQU0KdnRFZlNtYlh0ZlZKZERqZHByTER0emxZWXhOTjVUWHdOTEpNYWJFekowVUV5ZE1NZGJocFN4cXJ4elFNWkhvSwpibFlzWS9hNFJVQXI4NVVjdC8xcjFlR0I0U2dUcVk0aTNMd1p6N2I3SHRZaUZQdmZ0SW5XWnJUbk0rQ2NwTmwzCnV5WUxhQThkd25QQWR1OD0KLS0tLS1FTkQgQ0VSVElGSUNBVEUtLS0tLQo="
deployment:
  args:
  - --source=contour-httpproxy
  - --source=service
  - --log-level=debug
  #! Re-define the resource quota of external-dns pod/container if need
  resources:
    requests:
      cpu: "150m"
      memory: "256Mi"
    limits:
      cpu: "300m"
      memory: "512Mi"
```
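The `ca_crt` value in the example above is the base64 encoding of the full PEM text of the root CA certificate (the sample value decodes to a string beginning with `-----BEGIN CERTIFICATE-----`). A minimal sketch of producing and sanity-checking such a value with the Python standard library follows. The helper names are invented for this illustration and are not part of the ExternalDNS package.

```python
import base64


def encode_ca_cert(pem_text: str) -> str:
    """Base64-encode the full PEM text so it can be pasted into ca_crt."""
    return base64.b64encode(pem_text.encode("ascii")).decode("ascii")


def looks_like_encoded_pem(value: str) -> bool:
    """Check that a ca_crt value decodes back to a PEM certificate."""
    try:
        decoded = base64.b64decode(value, validate=True).decode("ascii")
    except ValueError:
        # Covers malformed base64 as well as non-ASCII decoded bytes.
        return False
    return decoded.startswith("-----BEGIN CERTIFICATE-----")
```

To use it, read your own `ca.crt` file as text, pass the contents to `encode_ca_cert`, and place the resulting single-line string in `ca_crt`.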

Validated Supported DNS Server Example:

- RFC2136 BIND DNS Server: Sample values can be downloaded from the same location as service.yaml. Replace the values indicated by the comments with your own DNS server details.

ExternalDNS Package Limitations

Use the External-DNS Supervisor Service for shared Supervisor cluster deployments. External-DNS is not suitable for multi-tenancy because it does not provide the isolation that is required for tenant-specific use cases.

For example, suppose the same DNS server provides two zones: t1.k8s.com for tenant1 and t2.bc.com for tenant2. The current External-DNS workflow is:

A. The tenant1 user is meant to use only zone t1.k8s.com.

B. The tenant2 user is meant to use only zone t2.bc.com.

C. However, if tenant2 tries to use t1.k8s.com, or tenant1 tries to use t2.bc.com, both requests are allowed, and the records are reconciled and updated accordingly.

See also the diagram at external-dns/package-limitation_multi-tenancy.png

Supervisor Management Proxy

Please refer to How to find and install Supervisor Services to find and install supervisor services.

Supervisor Management Proxy is required when the management network and the workload network are isolated from each other and the VKS clusters in the workload network cannot reach components running in the management network.

Supervisor Management Proxy supports the following use cases:

- Antrea-NSX adapter in VKS cluster to reach NSX manager. (Same as NSX Management Proxy)

- Send metrics from VKS Clusters to VCF Ops when monitoring VKS Clusters in VCF Ops.

- Callback into VKSM from auto-attach service and VKS clusters. (added in v0.4.0)

- Allow DPCA, VCFA and VC to be connected from the workload network. (added in v0.4.0)

Supervisor Management Proxy Versions

- v0.4.1 (requires vSphere 9.1.0 or later)

- v0.4.0 (requires vSphere 9.0.1 or later)

- v0.3.0 (requires vSphere 9.0 or later)

Supervisor Management Proxy Sample values.yaml

- Download sample values.yaml from the same location as Service yaml.

- For the Antrea-NSX use case, make sure to fill the property `nsxManagers` with your NSX Manager IP(s).

- For other use cases, there is no need to fill any property in the data values to configure the Supervisor Management Proxy.

NSX Management Proxy

Please refer to How to find and install Supervisor Services to find and install supervisor services.

NSX Management Proxy lets the Antrea-NSX adapter in Kubernetes clusters deployed by VKS reach NSX Manager. It is used when the management network and the workload network are isolated from each other and the VKS clusters cannot reach NSX Manager.

We recommend using Supervisor Management Proxy instead of NSX Management Proxy. The two proxies cannot be used together. If you are already using NSX Management Proxy, consider migrating to Supervisor Management Proxy for additional use cases. Contact Broadcom support for migration steps.

NSX Management Proxy has been deprecated since vSphere 9.0. We still maintain it for vSphere 8.0.* - 9.0.*, but will not maintain it for future vSphere releases.

NSX Management Proxy Versions

- For vSphere 8.0 Update 3 or later
  - v0.2.2

- For vSphere 8.0 Update 3
  - v0.2.1
  - v0.1.1

NSX Management Proxy Sample values.yaml

- Download sample values.yaml from the same location as Service yaml. Make sure to fill the property `nsxManagers` with your NSX Manager IP(s).

Note: NSX Management Proxy is supported in vSphere 8.0 Update 3 when Supervisor Clusters are enabled using the VMware NSX-T networking stack under the following configurations:

- NSX Load Balancer is configured as load balancing solution.

- NSX Gateway Firewall is enabled.

Data Services Manager Consumption Operator

Please refer to How to find and install Supervisor Services to find and install supervisor services.

The Data Services Manager (DSM) Consumption Operator facilitates native, self-service access to DSM within a Kubernetes environment. It exposes a selection of resources supported by the DSM provider, allowing customers to connect to the DSM provider from Kubernetes. Although the DSM provider does not currently support tenancy natively, the DSM Consumption Operator enables customers to seamlessly integrate their existing tenancy model, effectively introducing tenancy into the DSM provider.

- The DSM provider is a prerequisite for the DSM Consumption Operator, so it must be installed first.

- Installation instructions can be found here in VMware documentation

- Configuration instructions can be found here in VMware documentation.

Data Services Manager Consumption Operator Versions

- v9.1.0.0

- v9.0.0.0

- v2.2.1

- v2.2.0

- v1.2.0

- v1.1.2

- v1.1.1

- v1.1.0

Data Services Manager Consumption Operator Sample values.yaml

- Sample values can be found at the same location as service yaml

- v9.0.0.0: For details about each of the required properties, see the configuration details page.

- v2.2.1: For details about each of the required properties, see the configuration details page.

- v2.2.0: For details about each of the required properties, see the configuration details page.

- v1.2.0: For details about each of the required properties, see the configuration details page.

Installation Note:

- DSM Consumption Operator v9.0.0.0

  Add a new field `isSupervisor` to the `dsm` section of the values yaml. Its value should always be the boolean `true`. If you encounter any issues related to the Service-id, please contact Global Support Services (GSS) for immediate assistance.

- DSM Consumption Operator v2.2.X

  When installing DSM Consumption Operator v2.2.X as a Supervisor Service, if you encounter any issues related to the Service-id, please contact Global Support Services (GSS) for immediate assistance.
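As a rough illustration of the v9.0.0.0 installation note, the sketch below sets `isSupervisor` to `true` under the `dsm` section of values that have already been loaded into a Python dictionary (for example, by a YAML parser of your choice). The function name and the other key in the usage example are hypothetical and not taken from the real values file.

```python
def ensure_is_supervisor(values: dict) -> dict:
    """Return a copy of the values with dsm.isSupervisor set to True."""
    updated = dict(values)
    # Copy the dsm section so the caller's dictionary is left untouched.
    dsm = dict(updated.get("dsm") or {})
    dsm["isSupervisor"] = True
    updated["dsm"] = dsm
    return updated


# Hypothetical usage: "someExistingKey" stands in for real dsm settings.
example = ensure_is_supervisor({"dsm": {"someExistingKey": "value"}})
```

The copy-then-update approach keeps existing `dsm` settings intact and only adds or overrides the one field the note calls for.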

Upgrade Note:

- DSM Consumption Operator v9.0.0.0

  If you are upgrading from v2.2.X to 9.0.0.0, do not uninstall the existing version and do not change the values yaml. Instead, we highly recommend contacting GSS for guidance and support. This will ensure a smooth upgrade process and prevent potential disruptions. For additional help, please refer to the support documentation or reach out to our technical support team.

- DSM Consumption Operator v2.2.X

  Earlier versions of the DSM Consumption Operator, including v1.1.0, v1.1.1, v1.1.2 and v1.2.0, are deprecated and should not be used for new Supervisor Service installations. If you are upgrading from these older versions to v2.2.X, do not uninstall the existing version. Instead, we highly recommend contacting GSS for guidance and support. This will ensure a smooth upgrade process and prevent potential disruptions. For additional help, please refer to the support documentation or reach out to our technical support team.

Secret Store Service

Please refer to How to find and install Supervisor Services to find and install supervisor services.

Secret Store Service is a comprehensive solution for managing secrets in vSphere, ensuring the security and integrity of the environment and providing a robust and scalable solution for securely injecting secrets into workloads.

Secret Store Service Versions

- v9.1.0.0

- v9.0.0

Secret Store Service sample values.yaml

- Sample values can be downloaded from the same location as service yaml. Make sure to fill the property `storageClassName` with the storage policy name.

ArgoCD Service

Please refer to How to find and install Supervisor Services to find and install supervisor services.

Argo CD is a leading declarative GitOps continuous delivery tool that helps teams deliver applications quickly and precisely by continuously synchronizing the desired state defined in Git with live environments. The desired state of applications and infrastructure is defined explicitly in Git repositories, which serve as the single source of truth and provide transparency, version control, and auditability for deployments.

ArgoCD Service manages the entire lifecycle of an Argo CD instance, including creating, deleting, and upgrading Argo CD and updating its configuration. It gives both platform teams and developers access to automated, version-controlled delivery pipelines, whether they're managing vSphere Kubernetes Service (VKS) clusters, VMs, and vSphere Pods on the Supervisor or workloads on VKS clusters.

- Service installation and Configuration documentation

ArgoCD Service Versions

- v1.1.0

- v1.0.1

- v1.0.0

SRE Supervisor Role

The SRE Supervisor Role Service facilitates the configuration of specialized ClusterRoles and ClusterRoleBindings tailored for auditing and debugging. By streamlining access to the Supervisor Cluster and its services, it ensures that SREs and Platform Operators can manage resources securely and effectively, eliminating the need for direct SSH access into controlled systems.

SRE Supervisor Role Service Versions

Supervisor Services Labs Catalog

Experimental

The following Supervisor Services Labs catalog is only provided for testing and educational purposes. Please do not use these services in a production environment. These services are intended to demonstrate Supervisor Services' capabilities and usability. VMware will strive to provide regular updates to these services. The Labs services have been tested starting from vSphere 8.0. Over time, depending on usage and customer needs, some of these services may be included in the core product.

WARNING - By downloading and using these solutions from the Supervisor Services Labs catalog, you explicitly agree to the conditional use license agreement.

WARNING - All Supervisor Services Labs are unsigned and should be used in conjunction with the descriptions above.

Headlamp

Headlamp is an easy-to-use and extensible Kubernetes web UI. Headlamp was created to blend the traditional feature set of other web UIs/dashboards (i.e., listing and viewing resources) with additional functionality. Headlamp can be used in-cluster, via a web browser, or as a desktop application (using the information defined in the user’s kubeconfig). For a detailed description of Headlamp, visit Headlamp

Headlamp Versions

- Download latest version: Headlamp 0.41.0

Headlamp Sample values.yaml

Usage:

- The current Supervisor Service uses an in-cluster approach, providing users with an easy-to-use web UI. It needs Internet access to download the Headlamp image and the CertManager and ClusterAPI plugins. The CertManager plugin provides certificate management and observability, while the ClusterAPI plugin manages VKS clusters via the Headlamp UI. Future versions will let users download these images and binaries from an airgapped environment.

- The `values.yaml` file is optional. If you want to provide your own TLS certificate and key, you can override the default self-signed certificate using these options. TLS certificates and keys must be BASE64 encoded.

- Headlamp is now exposed as a `LoadBalancer` service. In future versions, you will be able to use an L7 object to front-end the service. Get the LoadBalancer IP with this command:

  ```bash
  kubectl get service headlamp-supervisor -n svc-headlamp-domain-xxxx
  ```

- Get the token from your `kubeconfig` file, with the current context set to the Supervisor:

  ```bash
  kubectl config view --flatten --minify -o jsonpath='{.users[0].user.token}'
  ```

- Open a browser to the service's IP address and enter the token to successfully authenticate.

- The number of clusters in the Supervisor can affect how long it takes the ClusterAPI plugin to become fully functional. Make sure the ClusterAPI plugin is installed for cluster management. For more details, visit here

External Secrets Operator

External Secrets Operator is a Kubernetes operator that integrates external secret management systems like AWS Secrets Manager, HashiCorp Vault, Google Secrets Manager, Azure Key Vault, IBM Cloud Secrets Manager, CyberArk Conjur, etc. The operator reads information from external APIs and automatically injects the values into a Kubernetes Secret. For a detailed description of how to consume External Secrets Operator, visit External Secrets Operator project

External Secrets Operator Versions

- Download latest version: External Secrets Operator v0.9.14

External Secrets Operator Sample values.yaml - None

- We do not provide this package's default `values.yaml`. This operator requires minimal configurations, and the necessary pods get deployed in the `svc-external-secrets-operator-domain-xxx` namespace.

Usage:

- Check out this example on how to access a secret from GCP Secret Manager using External Secrets Operator here

RabbitMQ Cluster Kubernetes Operator

The RabbitMQ Cluster Kubernetes Operator provides a consistent and easy way to deploy RabbitMQ clusters to Kubernetes and run them, including "day two" (continuous) operations. RabbitMQ clusters deployed using the Operator can be used by applications running on or outside Kubernetes. For a detailed description of how to consume the RabbitMQ Cluster Kubernetes Operator, see the RabbitMQ Cluster Kubernetes Operator project.

RabbitMQ Cluster Kubernetes Operator Versions

- Download latest version: RabbitMQ Cluster Kubernetes Operator v2.8.0

RabbitMQ Cluster Kubernetes Operator Sample values.yaml

- Modify the latest values.yaml by providing a new location for the RabbitMQ Cluster Kubernetes Operator image. This may be required to overcome DockerHub's rate-limiting issues. The RabbitMQ Cluster Kubernetes Operator pods and related artifacts get deployed in the `svc-rabbitmq-operator-domain-xx` namespace.

Usage:

- Check out this example on how to deploy a RabbitMQ cluster using the RabbitMQ Cluster Kubernetes Operator here

- For advanced configurations, check the detailed reference.

Redis Operator

A Golang-based Redis operator that oversees Redis standalone/cluster/replication/sentinel mode setup on top of Kubernetes. It can create a Redis cluster setup using best practices. It also provides an in-built monitoring capability using Redis-exporter. For a detailed description of how to consume the Redis Operator, see the Redis Operator project.

Redis Operator Versions

- Download latest version: Redis Operator v0.16.0

Redis Operator Sample values.yaml

- We do not provide this package's default `values.yaml`. This operator requires minimal configurations, and the necessary pods get deployed in the `svc-redis-operator-domain-xxx` namespace.

Usage:

- View an example of how to use the Redis Operator to deploy a Redis standalone instance here

- For advanced configurations, check the detailed reference.

KEDA

KEDA is a single-purpose and lightweight component that can be added into any Kubernetes cluster. KEDA works alongside standard Kubernetes components like the Horizontal Pod Autoscaler and can extend functionality without overwriting or duplication. With KEDA you can explicitly map the apps you want to use event-driven scale, with other apps continuing to function. This makes KEDA a flexible and safe option to run alongside any number of any other Kubernetes applications or frameworks. For a detailed description of how to use KEDA, see the Keda project.

KEDA Versions

- Download latest version: KEDA v2.13.1 Note: This version supports Kubernetes v1.27 - v1.29.

KEDA Sample values.yaml

- We do not provide this package's default `values.yaml`. This operator requires minimal configurations, and the necessary pods get deployed in the `svc-kedaxxx` namespace.

Usage:

- View an example of how to use KEDA `ScaledObject` to scale an NGINX deployment here.

- For additional examples, check the detailed reference.